I recently used GPTHuman AI Review and I’m confused about what the results actually mean and how accurate they are. Can someone explain how this tool works, how trustworthy its feedback is, and how I should use its insights to improve my content or decisions?

GPTHuman AI review from someone who burned time and throwaway emails on it

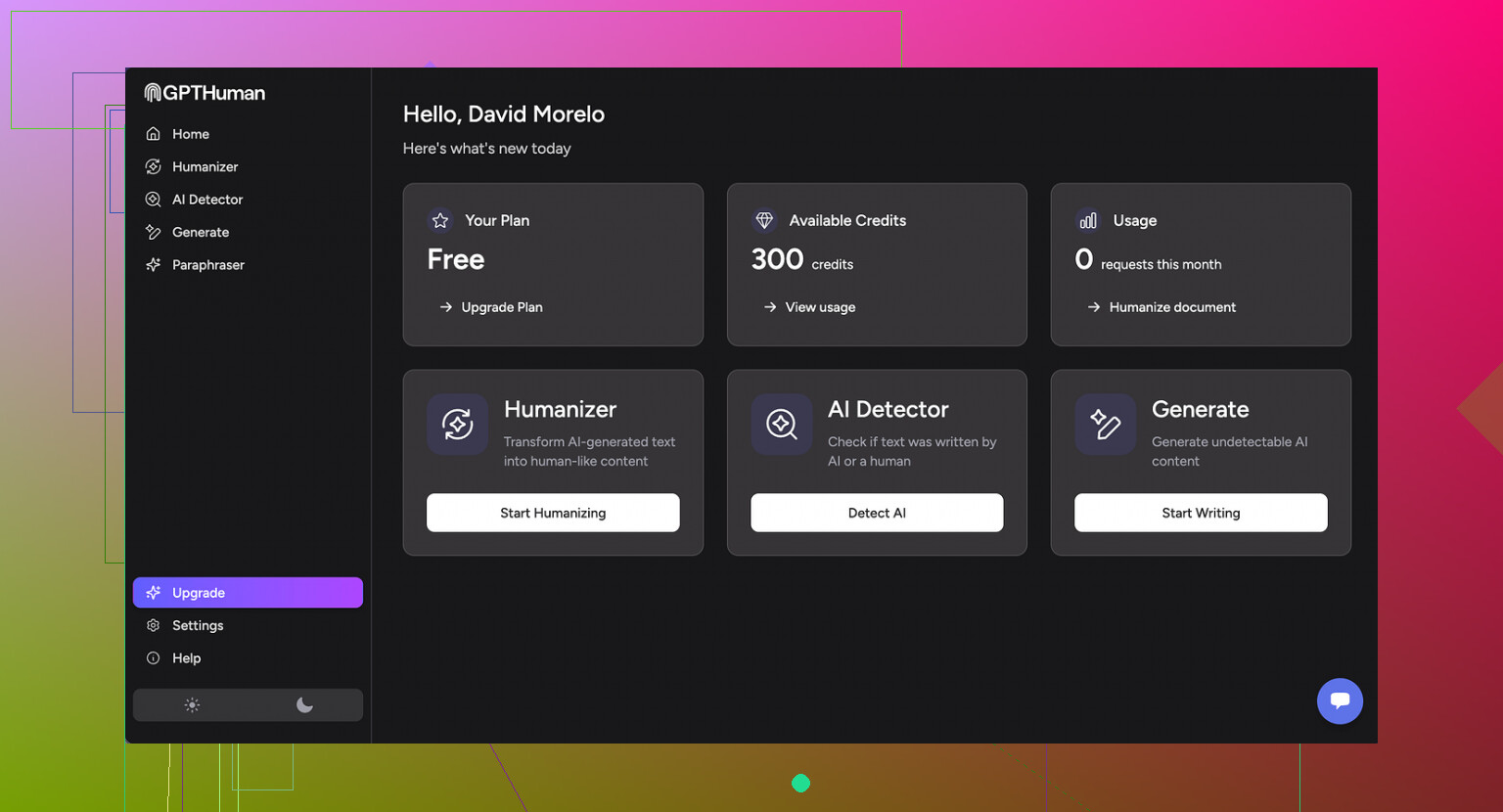

I tried GPTHuman because of the line on their page about being “the only AI humanizer that bypasses all premium AI detectors”. I wanted to see if that held up under a basic test, nothing fancy.

Short version, it did not.

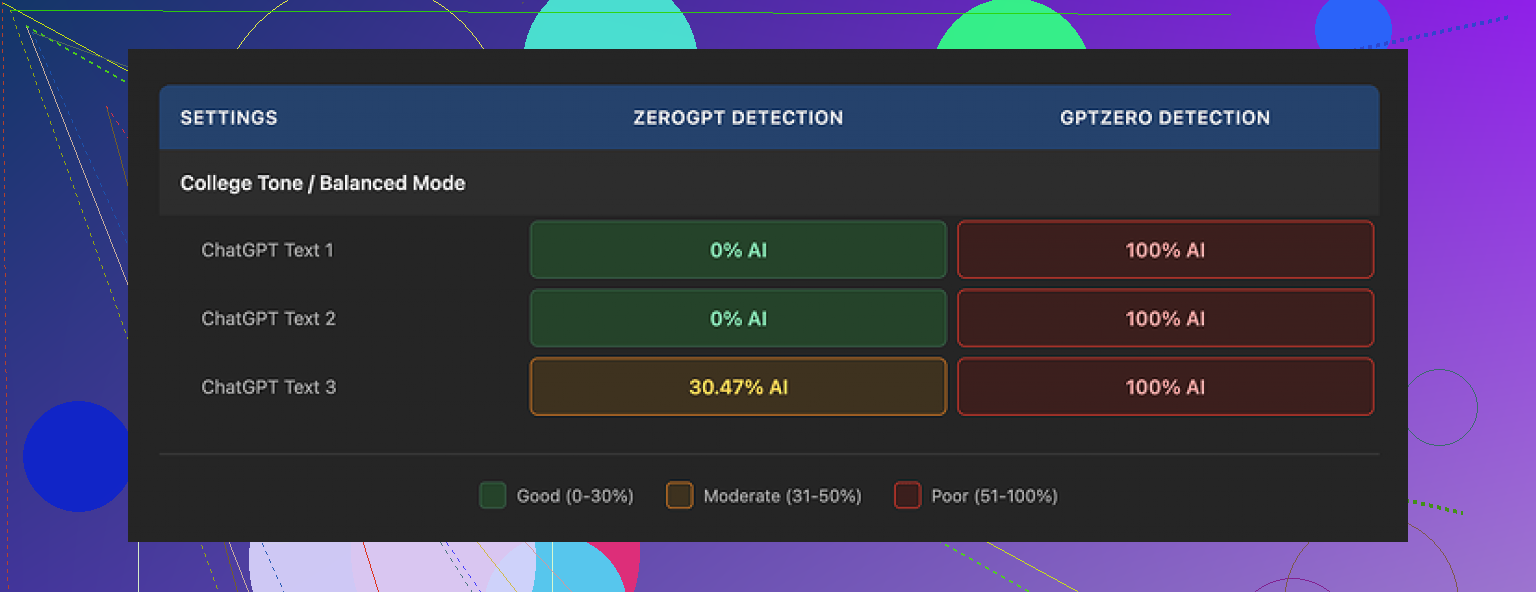

I ran three different texts through GPTHuman, then checked all outputs with external detectors:

• GPTZero flagged every single “humanized” version at 100% AI. No borderline calls, no mixed result, just full AI every time.

• ZeroGPT scored two of the samples as 0% AI, which at first looked decent, then called the third one out with an AI probability around 30%. Not exactly “bypasses all detectors”.

The weird part: GPTHuman’s own internal “human score” told a different story. Their meter showed strong passing scores on all outputs, while the external tools were calling them AI. So the built-in rating did not match what matters in practice, which is how external tools treat the text.

On readability, the paragraphs look ok at first glance. The structure feels like something you could paste into an email or blog post. Once you read closely, it starts to fall apart:

• Subject–verb agreement errors all over

• Sentences that trail off without finishing the idea

• Word swaps that break the meaning or sound off in context

• Some endings that felt almost scrambled, like the last lines were stitched together from the wrong phrases

For anything serious, I would not trust this unedited. You would need to manually fix grammar and clarity, which defeats the “one‑click humanizer” idea.

Pricing and limits I hit

The free tier surprised me in a bad way. It is not 300 words per run; it is about 300 words of total usage. After that, it locks you out.

I ran into that cap so quickly I ended up spinning up three new Gmail accounts to finish my normal test set. If you plan to run a batch of articles, you will hit a wall almost instantly unless you pay.

Paid plans (pricing when I tested):

• Starter: from $8.25 per month on an annual plan

• “Unlimited”: $26 per month

The “Unlimited” label is a bit misleading. Each run still maxes out at 2,000 words. So if you have long-form content, you have to split it, process in chunks, then stitch everything together and hope the tone does not jump around too much.
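
If you do end up splitting long pieces by hand, the chunking step is easy to script. This is only a rough sketch under my own assumptions: `split_into_chunks` is a made-up helper name, it counts words by whitespace (GPTHuman may count differently), and it prefers to break at blank-line paragraph boundaries so the tone is less likely to jump mid-paragraph.

```python
def split_into_chunks(text: str, max_words: int = 2000) -> list[str]:
    """Split text into chunks of at most max_words whitespace-separated words.

    Breaks at paragraph boundaries (blank lines) where possible. A single
    paragraph longer than max_words is hard-split by word count.
    """
    chunks: list[str] = []
    current: list[str] = []  # paragraphs collected for the chunk in progress
    count = 0                # words in the chunk in progress

    for para in text.split("\n\n"):
        words = para.split()
        n = len(words)
        if n == 0:
            continue  # skip empty paragraphs
        if n > max_words:
            # Flush what we have, then hard-split the oversized paragraph.
            if current:
                chunks.append("\n\n".join(current))
                current, count = [], 0
            for i in range(0, n, max_words):
                chunks.append(" ".join(words[i:i + max_words]))
            continue
        if count + n > max_words and current:
            # Adding this paragraph would exceed the cap, so start a new chunk.
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n

    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

You would still need to read the stitched result end to end, since nothing here keeps the tone consistent between chunks.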

Some policy notes worth reading before you hand them anything:

• Payments are non‑refundable, so if you do not like the results, you are stuck

• Your text is opted in for training by default, so you need to manually opt out

• They keep the right to use your company name in their promo material unless you tell them not to

None of this is hidden, but it is easy to skip if you click through fast.

What ended up working better

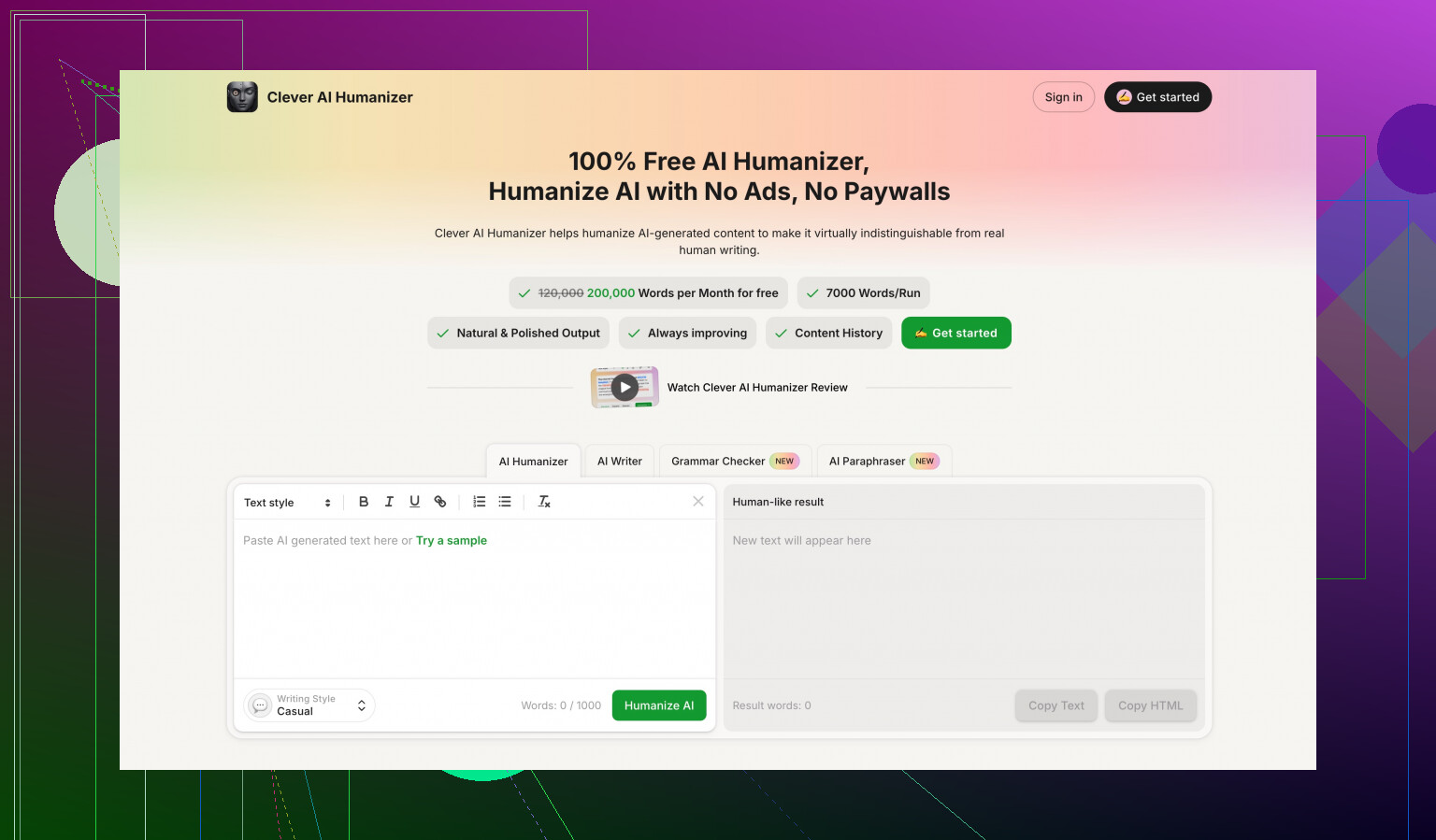

While I was benchmarking tools, I compared GPTHuman with Clever AI Humanizer, running the same source texts through both and checking detectors.

Clever AI Humanizer did better in two ways:

• Stronger scores on external AI detectors, more texts passing, fewer partial flags

• Fully free access, no hard word cap like the 300‑word total limit, and no juggling burner emails

If you want to see the detailed breakdown they posted, it is here:

If you are deciding where to spend time, my experience was:

GPTHuman

• Struggled with GPTZero

• Had mixed results with ZeroGPT

• Introduced a lot of grammatical noise

• Locked down the free tier very fast

• Came with data use and promo clauses you need to manually avoid

Clever AI Humanizer

• Scored stronger in my tests

• Stayed accessible without paywalls during the same runs

If you go with GPTHuman anyway, treat the output as a rough draft. Run it through a grammar checker, read it out loud, and always, always test with the same detectors your client, school, or platform uses.

Short version on GPTHuman AI Review and its “scores”:

- What the GPTHuman scores mean

Their internal “human score” is mostly a proprietary meter. It reflects how their own system thinks the text looks, not how external detectors treat it.

So if GPTHuman says 90 percent human, it only means it passes their internal checks. It does not guarantee GPTZero, ZeroGPT, or your school or client detector will agree.

- Why your results feel off

AI detectors disagree with each other a lot.

Example from tests similar to what @mikeappsreviewer did:

• GPTZero often flags long, fluent, low‑error text as AI, even if a person wrote it.

• ZeroGPT sometimes shows partial AI probability on the same paragraph.

GPTHuman tries to push your text into a “messier” style, which sometimes helps with some detectors, but it introduces grammar issues and weird phrasing. So you get text that looks more human to their system, but not cleaner or safer for you.

- How accurate or “trustworthy” it is

You need to separate three things:

• Grammar quality: GPTHuman output often needs edits. Subject-verb agreement issues, odd word choices, broken flow. You should not paste it raw into work, school, or client docs.

• Detection evasion: Mixed. For some detectors and some texts you might see lower AI scores. For tougher detectors like GPTZero, results are unreliable.

• Their internal score: Only useful as a rough internal gauge. It does not reflect what external tools will report.

I disagree a bit with the idea that the tool is useless if you need to edit. If you struggle to change tone or structure, GPTHuman can serve as a messy first draft. Then you clean it up by hand or with a grammar tool. That said, if your only goal is “pass AI detection at all costs”, the value is lower.

- How to use its insights without fooling yourself

If you keep using it, try this workflow:

• Step 1: Run your text through GPTHuman.

• Step 2: Immediately check the result with the same detector your teacher, client, or platform uses. Not a random different one.

• Step 3: Manually fix grammar, clarity, and any oddly worded sentences. Read it out loud. If it sounds robotic or off, detectors might still flag it.

• Step 4: Re‑run the edited version through the detector.

If the detector still flags it high, do not rely on GPTHuman for that use case.

- How to interpret conflicting detector scores

If GPTHuman says 95 percent human, GPTZero says 100 percent AI, and ZeroGPT says 30 percent AI, treat the strictest tool as your “truth”, because that is the one that will hurt you.
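
To make that concrete, here is a tiny sketch of the "strictest tool wins" rule. It assumes every external detector reports an AI probability from 0 to 100 and leaves GPTHuman's own meter out entirely; `strictest_reading` is just an illustrative name, and the numbers are the ones from the example above.

```python
def strictest_reading(ai_scores: dict[str, float]) -> tuple[str, float]:
    """Return the (detector, score) pair with the highest AI probability."""
    if not ai_scores:
        raise ValueError("need at least one external detector score")
    # The detector reporting the highest AI probability is the worst case.
    name = max(ai_scores, key=ai_scores.get)
    return name, ai_scores[name]


# External detectors only; the internal "human score" is deliberately ignored.
external = {"GPTZero": 100.0, "ZeroGPT": 30.0}
detector, score = strictest_reading(external)
print(f"Plan around {detector}: {score:.0f}% AI")
```

If you know which detector your school or client uses, skip the max and look only at that one.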

So if your school uses GPTZero and that tool screams AI, the GPTHuman meter is noise for you.

- Privacy and policy angle

You mentioned “how you should use its insights”, so this matters.

Text you submit to GPTHuman is opted in for training by default. Payments are non-refundable. They also reserve the right to use your company name in promo material unless you tell them not to.

So do not paste confidential contracts, unpublished manuscripts, or anything sensitive. Use generic or redacted versions if you need to test.

- What to do instead or in addition

If your goal is more natural text, not only detection evasion, then:

• Write your own draft in your normal voice.

• Use any AI tool for structure or ideas.

• Edit yourself to add your own examples, small mistakes, and personal details.

Detector scores tend to drop when the text reflects specific experience, not generic statements.

If your focus is “AI detector friendly text”, tools like Clever Ai Humanizer have scored better in some public benchmarks, including the one linked by @mikeappsreviewer. You still need to test with your own detector, but it might give you better starting output without hitting a tiny free cap.

- Practical rule of thumb

Treat GPTHuman scores as a hint, not a verdict.

The only scores that matter are:

• The detector your target uses.

• Your own quality check for grammar and clarity.

Use it as a rough helper, not as a guarantee of “safe” or “human” text.

Short version: treat GPTHuman’s “human score” as marketing fluff, not a safety check.

A few extra angles that build on what @mikeappsreviewer and @vrijheidsvogel already showed:

- What the GPTHuman meter is really telling you

It’s basically an internal “similar-to-our-target-style” score. It is not calibrated to any specific external detector.

So if you’re thinking “90% human means I’m safe for GPTZero / ZeroGPT / my school LMS,” that’s where the confusion starts. It’s more like a vibe score than a compliance score.

- Why it often feels off in real use

Their trick seems to be:

- Break up patterns that detectors associate with polished LLM text

- Inject noise: odd word choices, slight grammar errors, broken flow

That sometimes drops scores on certain detectors, but it also:

- Makes your writing look less competent

- Can increase suspicion from a human reviewer (“this reads like bad AI trying to be human”)

So the problem is: you’re trading one risk (detector flag) for another (low‑quality, weird text).

- How “trustworthy” is it, practically

I’d split it like this:

- Trust it for: “show me a messier, less-LLM-ish variant of this paragraph”

- Don’t trust it for: “this will pass the detector my job / uni uses”

Also, detectors themselves are unstable. They change models, thresholds, and even UI over time. So any tool claiming “bypasses all premium detectors” is overselling. If it really did that reliably, it would not stay public for long.

- Where I slightly disagree with the others

I don’t think the answer is always “use it only as a rough draft.” In some cases, GPTHuman makes the text so awkward that editing it back to something decent takes longer than just rewriting from scratch in your own voice.

If you’re decent at writing, you’ll probably get better, safer results by:

- Writing your own base text

- Using AI for idea support / structure

- Then injecting very specific personal details, examples, and small tangents

Detectors struggle more with content that includes genuine personal specifics, not generic reformulations.

- How to actually use its “insights” without getting burned

Instead of obsessing over their human score:

- Look at what it changes: sentence length, phrasing, grammar noise

- Decide what you want to keep: maybe the varied sentence structure, but not the broken grammar

- Reverse engineer those patterns into your own manual editing

That way GPTHuman becomes a “style lab” rather than a magic filter.

- Privacy & policy angle that really matters

Given:

- Auto opt‑in for training

- Non‑refundable payments

- Right to use your company name in promo by default

I personally would never paste anything sensitive, graded, or under NDA. If you wouldn’t post it in a public Discord, I wouldn’t drop it into GPTHuman either.

- If evading detectors is your actual priority

You already saw they struggle with GPTZero and give mixed results on ZeroGPT in tests like the ones @mikeappsreviewer ran. If your real goal is “AI detection friendly text,” then:

- Use the same detector your target uses for your own checks. That is the only score that matters.

- Consider testing alternatives like Clever Ai Humanizer. In public comparisons it tends to produce fewer grammatical glitches and better scores on some detectors, and it’s a lot less stingy on free usage. Still not a silver bullet, but a less annoying sandbox.

- Mental model to keep you sane

Think of GPTHuman as:

- A noisy style transformer

Not as:

- A legal shield

- A guaranteed bypass

- A reliable indicator of what your teacher / editor / HR system will see

If you treat its output as “maybe useful inspiration, definitely not a pass guarantee,” you’ll be much less disappointed and way less confused by those conflicting scores.

Think of GPTHuman as a noisy style filter, not a lie detector for AI content.

What’s actually going on:

- Why GPTHuman feels “off”

It tries to break typical LLM patterns by injecting chaos: shorter sentences, odd word swaps, occasional grammar slips. Detectors sometimes see that as “more human,” but not consistently. That is why your GPTHuman score can look great while GPTZero screams 100 percent AI. The others already showed that in practice.

- How accurate is it really

I’d put it like this:

- Reliable for: showing how a more “imperfect” version of your text might look.

- Unreliable for: predicting what any specific detector or school platform will say.

Detectors are not standardized. Treat GPTHuman’s meter as an internal flavor gauge, not a truth meter.

- Where I slightly disagree with others

Some replies imply “just use it as a messy draft and edit.” That can work, but if you’re already a halfway decent writer, you may be better off editing your own draft to:

- Vary sentence length.

- Add specific, concrete personal details.

- Keep mild natural quirks without trashing grammar.

GPTHuman often breaks semantics or tone so much that fixing it is slower than just rewriting.

- How to interpret conflicting scores without going in circles

Instead of obsessing over 90 vs 95 percent “human”:

- Anchor on the one detector that actually matters for you (school, client, platform).

- Everything else, including GPTHuman’s internal score, is background noise.

If that detector hates your text, GPTHuman “passing” is irrelevant.

- Using tools without fooling yourself

A more sustainable mental model:

- Use AI tools for structure, ideas, or stylistic variation.

- Make the final pass yourself and deliberately inject your own experience, opinions, and specific examples.

Detectors tend to struggle more with text tied to real events in your life than generic, polished prose.

- About Clever Ai Humanizer

Since it came up already, here is a straight read, not hype:

Pros:

- Generally cleaner grammar than GPTHuman in many tests.

- Less stingy free access, which matters if you are experimenting a lot.

- Often produces text that needs fewer “this makes no sense” fixes.

Cons:

- Still not a magic “bypass everything” button. Tough detectors can still flag it.

- You can still get that slightly uniform AI feel if you do not add your own edits.

- Like any humanizer, it can encourage people to over-rely on tools instead of learning to write naturally.

If your priority is readability plus somewhat lower AI detection risk, Clever Ai Humanizer is a more comfortable sandbox than GPTHuman’s glitchy style, but you still need to test against the specific detector that will judge you.

- How to actually use GPTHuman’s insights

Instead of taking its output at face value, treat it as something to reverse engineer:

- Notice how it changes phrasing, splits long sentences, and introduces small imperfections.

- Apply the useful parts manually to your own writing, without copying the broken grammar.

This way you get the “insight” (what detectors tend to like less) without shipping obviously mangled text.

In short: @vrijheidsvogel, @waldgeist, and @mikeappsreviewer are right to be skeptical of the “bypasses all detectors” claim. Use GPTHuman and similar tools as style experiments, not as guarantees. The only opinion that counts is the detector (and human reviewer) directly in front of you.