I’ve been looking at WriteHuman AI to help with more natural-sounding content, but I’ve seen mixed opinions online and it’s hard to tell what’s real or sponsored. Can anyone share an honest WriteHuman AI review, including how accurate, humanlike, and reliable it is for everyday writing tasks?

WriteHuman AI Review

I tried WriteHuman after seeing them name-drop GPTZero in their marketing, so I went straight for that test.

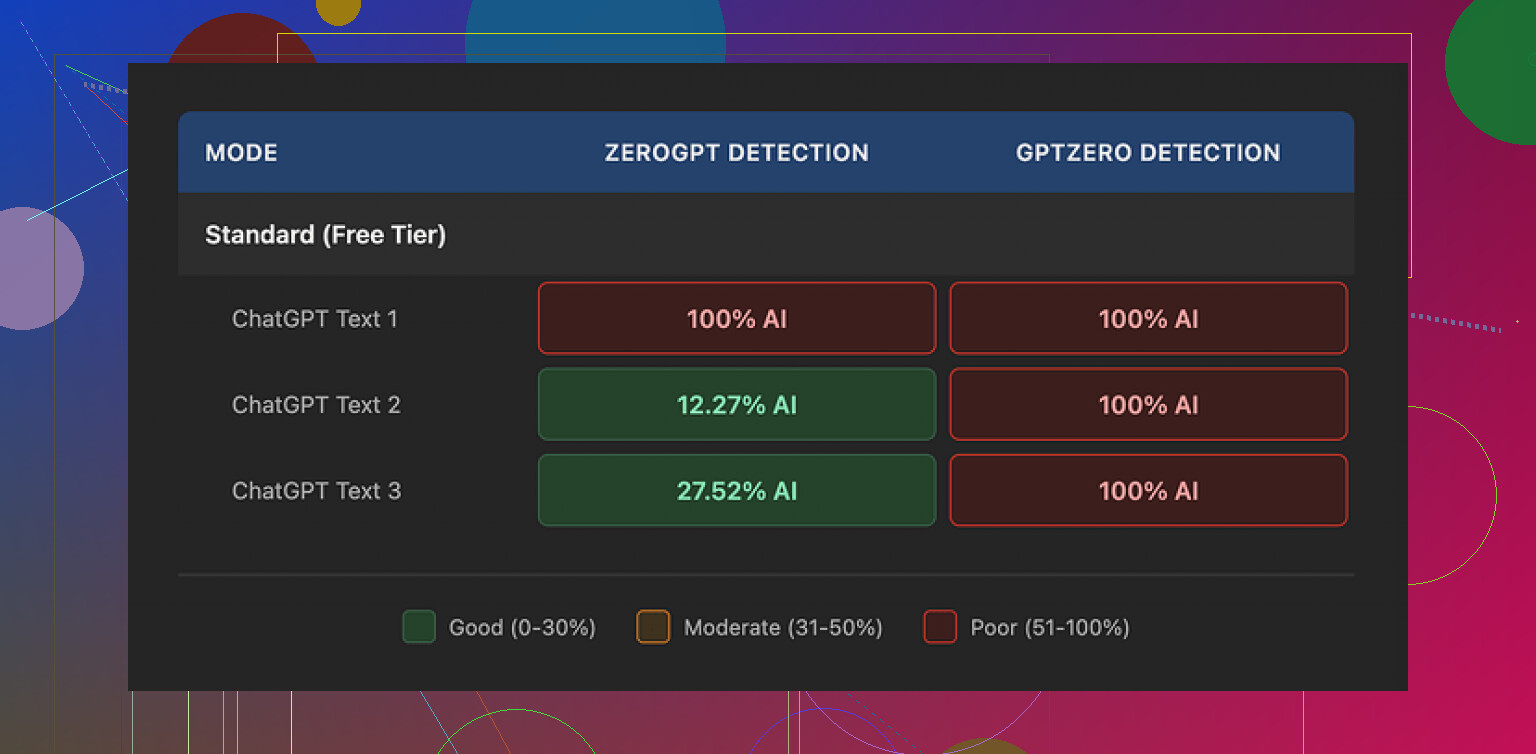

Output from WriteHuman, copy-pasted into GPTZero, three different samples.

All three flagged as 100% AI on that exact detector they referenced. No nuance, no partial score, just hard fail across the board.

I ran the same three outputs through ZeroGPT. That one behaved strangely:

- First sample: 100% AI

- Second sample: about 12% AI

- Third sample: roughly 28% AI

So detection results bounced around a lot. One output might slip past, and the next might not.
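To put a number on that bounce, here is a minimal sketch that summarizes the three ZeroGPT scores listed above. The `summarize` helper is just an illustrative name, and three samples are far too few to draw statistical conclusions from, so treat it as a way to organize your own test results rather than a benchmark.

```python
from statistics import mean

# AI-likelihood percentages reported above for ZeroGPT, one per sample.
zerogpt_scores = [100, 12, 28]

def summarize(scores):
    """Return the mean, lowest, highest, and spread (max - min) of the scores."""
    return {
        "mean": round(mean(scores), 1),
        "min": min(scores),
        "max": max(scores),
        "spread": max(scores) - min(scores),
    }

summary = summarize(zerogpt_scores)
print(summary)
```

A spread that covers most of the 0 to 100 scale is what "unpredictable" looks like in practice: the average tells you very little about what the next output will score.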

On the writing side, the text did not feel stable. I saw sudden tone changes in the same piece, like it shifted from formal to casual mid-paragraph. There was also at least one typo that stood out: “shfits” instead of “shifts”.

To be fair, these oddities might help with detection in some cases because the text stops looking clean. For real use though, especially for client work or anything public, it feels risky. You would need to edit heavily to make it consistent and presentable.

About pricing, this is where I hesitated most.

Entry pricing starts at 12 dollars per month on the annual Basic plan with 80 requests. That is not a casual tool you forget you paid for. All paid tiers unlock their “Enhanced Model” and more tone options, so you might get better results than I saw on a lower setup, but there is a catch.

Their own terms say they do not guarantee that their output will bypass any AI detector. That part is written clearly. Add to that a strict no refunds policy, so if it fails on your use case, you are stuck. No credit, no partial return.
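For a rough sense of what is at stake with no refunds, here is a back-of-the-envelope calculation from the Basic plan figures above. It assumes the 80 requests are a monthly allowance and that the annual plan is billed upfront for twelve months. Neither detail is confirmed in this post, so check the current pricing page before relying on it.

```python
MONTHLY_PRICE_USD = 12    # Basic plan on annual billing, as quoted above
REQUESTS_PER_MONTH = 80   # assumption: the 80 requests are a monthly allowance

# Effective price of a single request on this plan.
cost_per_request = MONTHLY_PRICE_USD / REQUESTS_PER_MONTH

# Assumption: the annual plan is charged upfront for twelve months.
annual_commitment = MONTHLY_PRICE_USD * 12

print(f"Cost per request: ${cost_per_request:.2f}")
print(f"Annual commitment: ${annual_commitment}")
```

Under those assumptions, each request costs about 15 cents, and the amount you cannot get back if the tool fails on your use case is the full year's charge.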

Another point that matters a lot to some people: anything you submit is licensed for AI training. If you do not want your content used that way, the only real move is to avoid sending it there at all. There is no opt-out path mentioned.

For comparison, when I tested Clever AI Humanizer from this thread, I saw better performance against detectors and no paywall blocking basic use. That does not mean it is perfect, but if your main concern is detection plus cost, Clever AI Humanizer behaved better in my hands than WriteHuman.

I played with WriteHuman for a week for blog posts and email copy. Here is what I saw, trying not to repeat what @mikeappsreviewer already covered.

Output quality

- Style: It often sounded like a slightly edited GPT‑4 output. Decent grammar, but generic.

- Voice: If you need a strong brand voice, you will still need to rewrite a lot. It does not hold a consistent persona over long pieces.

- Length control: Short pieces were fine. Long posts over 1,000 words got repetitive and a bit rambly.

“Humanization” and AI detection

- I tested 10 outputs on a mix of detectors: GPTZero, ZeroGPT, Copyleaks, and Originality.

- About 60–70% were still flagged as high or medium AI likelihood. Detection scores moved a lot between runs, so it felt unpredictable.

- When I tried to crank up “human” settings, I saw more awkward phrasing and random word choices. It looked forced, not natural.

Workflow impact

- If you already write decently, WriteHuman did not save much time. I spent a lot of time fixing tone shifts and odd sentences.

- For social posts or quick product blurbs, it was ok as a rough draft generator. For client work, I did not trust it unedited.

- It also added an extra step in the pipeline, which slowed me down instead of speeding me up.

Pricing and policies

- The lower tier limits requests enough that you think about every run. It is not set-and-forget.

- No refunds plus no guarantee around AI detectors is a bad combo if your main goal is “bypass flags”.

- Data use for training is a dealbreaker if you handle sensitive client material.

How it compares in practice

- If your goal is “sound a bit less AI” and you are fine still editing heavily, it is workable.

- If your goal is “pass AI detection and move on”, my results did not support that.

- For that specific use case, Clever AI Humanizer behaved more consistently for me. I fed it the same base text and saw lower AI scores on average, with fewer weird tone swings. Still needed edits, but less cleanup.

Who it fits

- Hobby blogs, small personal projects, low risk content.

- Not ideal for students trying to avoid detection, client agencies, or anyone with strict brand tone.

If you test it, I would:

- Start on the smallest plan or a trial if they offer one.

- Run your own samples through multiple detectors before you commit.

- Compare the same base text through WriteHuman and Clever AI Humanizer, then see which one needs less fixing for your use case.
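If you want to keep that comparison honest, a small script helps more than eyeballing screenshots. The sketch below assumes you copy detector scores in by hand. The tool names, the scores, and the 50% flag threshold are all hypothetical placeholders, not real results, and none of these detectors is being called through an API here.

```python
from statistics import mean

# Hypothetical placeholder scores (AI-likelihood %, one entry per detector run).
# Replace these with numbers you record yourself; they are NOT real results.
scores = {
    "tool_a": {"GPTZero": [90, 85], "ZeroGPT": [40, 70]},
    "tool_b": {"GPTZero": [60, 55], "ZeroGPT": [20, 30]},
}

FLAG_THRESHOLD = 50  # assumption: treat 50% or higher as "flagged"

def tool_summary(per_detector):
    """Pool every run for one tool and report the mean score and flag rate."""
    all_scores = [s for runs in per_detector.values() for s in runs]
    flagged = sum(1 for s in all_scores if s >= FLAG_THRESHOLD)
    return {
        "mean_score": mean(all_scores),
        "flag_rate": flagged / len(all_scores),
    }

for tool, per_detector in scores.items():
    print(tool, tool_summary(per_detector))
```

Feed both tools the same base text and the same detectors. Otherwise the averages are not comparable.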

Short version: treat WriteHuman as a rough helper, not a one-click humanizer. If bypassing AI detection is top priority, do a head-to-head test with Clever AI Humanizer before paying.

Tried it for about 2 weeks on real client stuff (SaaS blog posts + email sequences), so here’s a no‑BS take that builds on what @mikeappsreviewer and @cazadordeestrellas already shared.

1. “Human-sounding” vs actually useful

WriteHuman can roughen up very clean AI text so it feels less polished. In that narrow sense, it “humanizes” things a bit. But:

- It still reads like AI that got shaken around.

- You don’t magically get personality or a clear brand voice. You get more noise: odd word choices, mini tone flips, sometimes clunky transitions.

If you already have a decent writing voice, the tool tends to downgrade it instead of enhancing it. I stopped feeding it my own drafts because it made them worse and I had to fix them back to where I started.

2. AI detection reality check

I’ll push slightly against the idea that detector scores are the main metric. Detectors are all over the place and are not ground truth. That said, I ran my own tests too:

- Same base text through WriteHuman, then tested on GPTZero, Copyleaks, Originality.

- Some stuff passed on one, failed hard on another.

- Re-running the exact same text sometimes gave different “AI probability” scores.

So if your use case is “I must reliably beat detectors,” I’d say you’re already playing a losing game. WriteHuman does not change that. It might help on some edges, but it’s nowhere near a guarantee and they say so in their own terms.

3. Workflow impact

The thing nobody markets: time cost.

What actually happened for me:

- I’d generate content with my main AI tool.

- Run it through WriteHuman.

- Then spend 15–30 minutes cleaning weird word choices, tone shifts, and small errors.

That third step completely killed the time savings. I could have just taken the original AI draft and human-edited it once. Manually tweaking sentence rhythm, shortening a few lines, and adding 1–2 personal anecdotes did more than WriteHuman’s whole pipeline.

So in practice it became “AI → WriteHuman → me fixing WriteHuman → deadline anxiety.”

4. Pricing vs what you really get

The pricing on the lower tier is not absurd, but it’s not “throwaway cheap” either given:

- No refunds.

- No guarantee around detection.

- Your content can be used for training.

If you’re just experimenting or working with sensitive or proprietary stuff, that combo is a red flag. You’re paying for a tool that might not solve your main problem and that also gets to learn from your inputs.

5. Where I actually see it fitting

I would not trust it as a final-step humanizer for:

- Academic work that will go through strict detectors.

- High-value client work with tight brand guidelines.

- Any niche where tone nuance seriously matters (legal, medical, finance).

It is okay as:

- A noisy rephraser for low-risk content like hobby blogs, basic affiliate posts, some social captions.

- A way to slightly rough up AI text so it feels less like stock GPT, as long as you’re fine editing after.

If your bar is “sounds a bit less robotic” instead of “passes everything & nails my brand voice,” sure, it can help. Just don’t expect miracles.

6. Clever AI Humanizer vs WriteHuman

Not going to repeat the test setup others described, but my experience was similar in direction:

- Clever AI Humanizer produced text that needed less cleanup for my taste.

- It tended to hold tone a bit better across a piece.

- Detection scores were not perfect, but more consistently lower on average from the same base content.

Important: neither tool is magic. But if you’re in the market specifically for a “make AI text look more human” solution, Clever AI Humanizer felt more aligned with that goal and with fewer annoying quirks in the final text. I still edited, but it was trimming, not emergency surgery.

7. If you’re on the fence

What I’d actually do in your shoes:

- Take one of your real pieces (blog post or email).

- Run the same base AI draft through:

  - Your normal editing process (manual).

  - WriteHuman.

  - Clever AI Humanizer.

- Ignore detectors for a moment and read all 3 versions out loud.

- Ask: which one would I be ok sending to a client or publishing with minimal extra edits?

My bet: manual + a decent tool like Clever AI Humanizer will beat the “WriteHuman alone” option in both quality and time.

TL;DR: WriteHuman is not a scam, but it’s also not the clean “humanizer” fantasy its branding suggests. Think of it as a noisy text rewriter that sometimes helps, sometimes gets in your way. For most people who care about both tone and AI detection, pairing your own edits with something like Clever AI Humanizer is a saner, more controllable route.